Google TurboQuant Explained: Lower Memory Use, Big Impact on AI Industry

- by Admin

- UPDATED: 26 Mar, 11:45 am IST

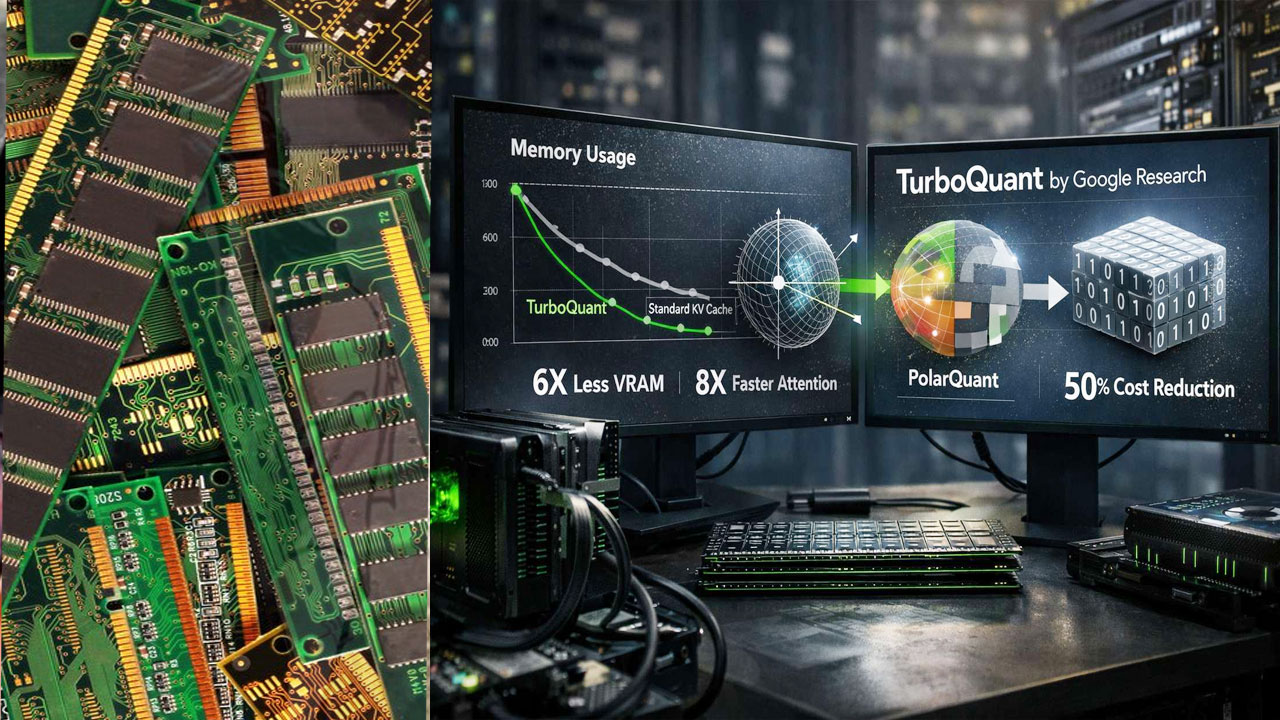

Google TurboQuant AI Memory Optimization Technology

Google has introduced TurboQuant, a new compression algorithm aimed at improving the efficiency of artificial intelligence systems. The announcement led to a decline in shares of several major memory chip manufacturers on Wall Street.

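According to Google, TurboQuant is designed to optimize how data is stored in the key-value (KV) cache, the store of attention keys and values that a language model keeps for the tokens it has already processed. Compressing that cache lets AI systems run more efficiently while reducing the need for high memory capacity.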
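To give a feel for why the KV cache matters for memory demand, the back-of-the-envelope calculation below estimates its size at different bit widths. It is a rough sketch only: the model dimensions and the bit widths are hypothetical values chosen for illustration, not figures from Google's announcement.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bits_per_value, batch_size=1):
    """Approximate KV cache size in bytes.

    One key vector and one value vector are stored per token, per layer,
    per KV head, hence the leading factor of 2.
    """
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return values * bits_per_value // 8


if __name__ == "__main__":
    # Hypothetical model shape, assumed purely for illustration.
    shape = dict(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=8192)
    for bits in (16, 4):  # assumed bit widths, not Google's reported settings
        size_mib = kv_cache_bytes(bits_per_value=bits, **shape) / 2**20
        print(f"{bits:>2} bits per value: {size_mib:.0f} MiB")
```

The cache grows linearly with context length, so any reduction in bits per stored value translates directly into a proportional cut in memory.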

Following the announcement, shares of SanDisk fell by up to 4%, while Micron Technology dropped 3.4%. Western Digital declined by over 1%, and Seagate Technology slipped nearly 3%, according to market data. Meanwhile, the Nasdaq 100 index ended the session 0.7% higher.

The TurboQuant system operates in two stages. First, PolarQuant rotates the data vectors and compresses them, which does most of the compression work. Second, the Quantized Johnson-Lindenstrauss (QJL) algorithm reduces the error left over from the first stage. Google noted that older methods required additional bits of memory as overhead, which limited their compression efficiency.
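The two stages described above can be illustrated with a toy example. The sketch below is not Google's implementation: it assumes a random rotation followed by a plain uniform quantizer for the first stage, and a 1-bit sign sketch of the residual (in the spirit of QJL) for the second. All function names are hypothetical, and for simplicity it stores a per-vector scale, which is the kind of overhead the real method is said to avoid.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def matvec(matrix, vec):
    return [dot(row, vec) for row in matrix]


def gaussian_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def random_rotation(dim, rng):
    """Random orthogonal matrix via Gram-Schmidt on Gaussian vectors."""
    basis = []
    while len(basis) < dim:
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for u in basis:
            proj = dot(v, u)
            v = [a - proj * b for a, b in zip(v, u)]
        norm = math.sqrt(dot(v, v))
        if norm > 1e-9:
            basis.append([a / norm for a in v])
    return basis


def quantize(y, bits):
    """Uniform scalar quantizer on [-peak, peak]; returns codes and scale."""
    peak = max(abs(v) for v in y) or 1.0
    step = 2 * peak / (2 ** bits - 1)
    codes = [round((v + peak) / step) for v in y]
    return codes, peak, step


def dequantize(codes, peak, step):
    return [c * step - peak for c in codes]


def encode(x, rotation, proj, bits):
    """Stage 1: rotate and quantize. Stage 2: 1-bit sketch of the residual."""
    y = matvec(rotation, x)
    codes, peak, step = quantize(y, bits)
    residual = [a - b for a, b in zip(y, dequantize(codes, peak, step))]
    signs = [1 if s >= 0 else -1 for s in matvec(proj, residual)]
    return {"codes": codes, "peak": peak, "step": step,
            "signs": signs, "r_norm": math.sqrt(dot(residual, residual))}


def estimate_dot(q, enc, rotation, proj):
    """Estimate <q, x> from the compressed form of x."""
    rq = matvec(rotation, q)  # rotations preserve inner products
    coarse = dot(rq, dequantize(enc["codes"], enc["peak"], enc["step"]))
    # Sign-sketch estimator of <rq, residual>, scaled by sqrt(pi/2) * ||residual||.
    scale = math.sqrt(math.pi / 2) * enc["r_norm"] / len(enc["signs"])
    correction = scale * dot(matvec(proj, rq), enc["signs"])
    return coarse + correction


if __name__ == "__main__":
    rng = random.Random(0)
    dim, sketch_size = 16, 64
    rotation = random_rotation(dim, rng)
    proj = gaussian_matrix(sketch_size, dim, rng)
    key = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    query = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    enc = encode(key, rotation, proj, bits=3)
    print("exact:   ", dot(query, key))
    print("estimate:", estimate_dot(query, enc, rotation, proj))
```

The first stage carries most of the information in a few bits per coordinate, while the second stage spends one bit per projection to correct the inner-product estimate for the quantization residual. In a single run the corrected estimate is noisy; the correction is unbiased only on average over the random projections.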

Algorithmic Advancements

Google evaluated TurboQuant on multiple benchmarks, including LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval, using models such as Gemma and Mistral. The company reported improvements in accuracy and recall along with lower KV memory usage, outperforming earlier techniques like PolarQuant and KIVI across tasks such as question answering, coding, and summarization.

Google emphasized that TurboQuant and related methods represent not only practical tools but also significant algorithmic advancements with strong theoretical foundations.

“These methods are provably efficient and operate near theoretical lower bounds, making them reliable for large-scale AI systems,” the company stated.

The technology is expected to play a key role in enhancing semantic search and large language model performance. Google added that reduced memory usage and minimal preprocessing requirements will make AI systems faster and more efficient as adoption continues to grow across products and services.
